Key Takeaways

- TiDB X builds indexes at up to 5.5M rows/s, and distributed builds run up to 72× faster than traditional online DDL.

- DXF splits index tasks across multiple nodes, scaling throughput near-linearly.

- Global Sort eliminates SST overlaps, reducing compactions and stabilizing TiKV performance.

- Elastic worker clusters offload index computation from production, keeping QPS and latency stable.

Adding an index has always been a sensitive operation:

- For small tables, TiDB Online DDL with Fast DDL (Ingest) has delivered index builds up to 10× faster than traditional transactional DDL since v6.5.0, as we described in How TiDB Achieves 10x Performance Gains in Online DDL.

- However, as tables grow to hundreds of TBs and production clusters run more business workloads, the old approaches hit new limits:

  - A single-node DDL owner can become a CPU/IO bottleneck.

  - Distributed index builds introduce SST overlaps, causing compactions and extra load on TiKV.

  - Even optimized ingest still consumes production cluster resources, risking QPS and latency fluctuations.

The challenge is clear: How do we build indexes that are fast, stable, and minimally disruptive, even at massive scale?

TiDB X, the latest version of TiDB that introduces dedicated object storage, addresses this problem through a combination of three major innovations:

- DXF (Distributed Execution Framework): Distributes index tasks across multiple nodes to dramatically improve performance.

- Global Sort: Globally orders index SST files before ingestion, reducing RocksDB compactions and stabilizing TiKV.

- Elastic Worker Clusters with Side-loading: Moves most index computation out of the production cluster, further minimizing impact on online workloads.

In this blog, we explain why each innovation was needed, how it works, and how together they achieve 5.5M rows/s with near-zero business impact.

DXF: Distributed Execution Framework

Why DXF? Fast DDL with Ingest removed the transactional bottleneck, but large tables still suffered from single-node limits:

- Only one DDL owner node executes the ADD INDEX job, which may max out the node’s CPU and I/O.

- Other tasks on the cluster experience resource contention.

- Only one index build could run at a time.

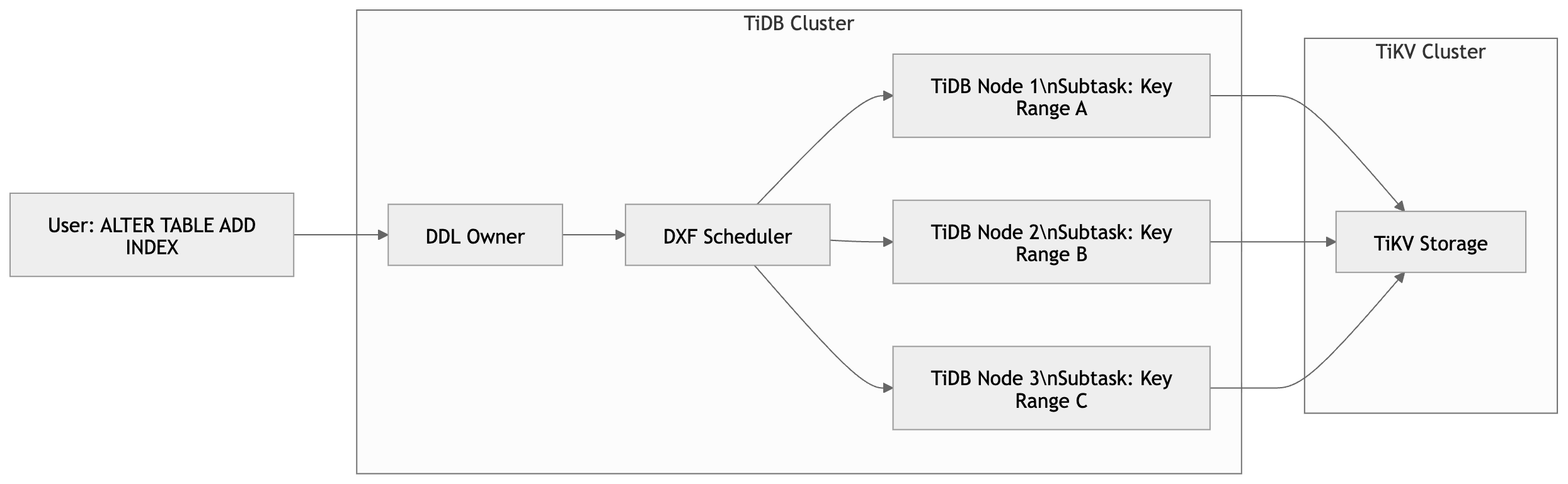

Solution: DXF splits a single Add Index task into multiple subtasks, which are scheduled across multiple TiDB nodes. Subtasks run in parallel.

How it works:

Figure 1: Scheduler distributing index tasks to nodes.

- The scheduler divides the table’s index key ranges into subtasks.

- Each TiDB node processes its subtasks independently, building local index data and ingesting it into TiKV.

- When all subtasks complete, TiDB merges and commits the results.
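
The flow above can be sketched in a few lines of Python. This is a minimal illustration, not TiDB's implementation: the table is modeled as an in-memory list, subtasks are split by row-ID range rather than by real TiKV key ranges, threads stand in for TiDB nodes, and the names `split_key_range`, `build_index_subtask`, and `add_index` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_key_range(start, end, num_subtasks):
    """Split [start, end) into contiguous, non-overlapping subranges."""
    step, remainder = divmod(end - start, num_subtasks)
    ranges, lo = [], start
    for i in range(num_subtasks):
        # Spread the remainder over the first subranges so sizes differ by at most 1.
        hi = lo + step + (1 if i < remainder else 0)
        ranges.append((lo, hi))
        lo = hi
    return ranges


def build_index_subtask(table, key_range):
    """Stand-in for one node's work: scan its rows and emit sorted (index_value, row_id) pairs."""
    lo, hi = key_range
    return sorted((table[row_id], row_id) for row_id in range(lo, hi))


def add_index(table, num_nodes):
    """Hypothetical scheduler: split the job, run subtasks in parallel, wait for all of them."""
    subtasks = split_key_range(0, len(table), num_nodes)
    with ThreadPoolExecutor(max_workers=num_nodes) as pool:
        results = list(pool.map(lambda r: build_index_subtask(table, r), subtasks))
    return subtasks, results


if __name__ == "__main__":
    table = [5, 3, 9, 1, 7, 2, 8]  # list position = row ID, value = indexed column
    subtasks, results = add_index(table, num_nodes=3)
    for key_range, entries in zip(subtasks, results):
        print(key_range, entries)
```

Each subtask's output is sorted only within its own range, so the index-value ranges produced by different nodes can overlap with one another.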

Benefits:

- Index build throughput scales near-linearly with node count.

- Large tables are indexed much faster, without overloading a single node.

| Case | Index Creation Method | Rows | Node Count | Avg Time | Performance vs Transaction DDL |
| --- | --- | --- | --- | --- | --- |
| 1 | Transaction Online DDL | 1,000,000,000 | 1 | 12h 21m | 1× |
| 2 | Fast DDL | 1,000,000,000 | 1 | 30m | 24× |
| 3 | Fast DDL + DXF | 1,000,000,000 | 2 | 14–15m | 48× |
| 4 | Fast DDL + DXF | 1,000,000,000 | 3 | 10m | 72× |

Note: These numbers illustrate the relative performance differences between index creation strategies and may not represent all production environments.
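
As a quick sanity check on the table, the speedups can be recomputed directly from the listed times. The snippet below is only arithmetic on the table's own values; the one assumption is using 14.5 minutes as the midpoint of the "14–15m" entry.

```python
def to_minutes(hours=0, minutes=0):
    """Convert an hours/minutes duration to minutes."""
    return hours * 60 + minutes


baseline = to_minutes(hours=12, minutes=21)  # Case 1: 741 minutes

# Build times in minutes for cases 2-4; 14.5 is an assumed midpoint of "14-15m".
runs = {
    "Fast DDL, 1 node": 30,
    "Fast DDL + DXF, 2 nodes": 14.5,
    "Fast DDL + DXF, 3 nodes": 10,
}

speedups = {name: baseline / elapsed for name, elapsed in runs.items()}

if __name__ == "__main__":
    for name, speedup in speedups.items():
        print(f"{name}: {speedup:.1f}x")
```

This gives roughly 24.7×, 51.1×, and 74.1×, so the table's 24×, 48×, and 72× read as conservative, rounded figures that scale at about 24× per node.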

Global Sort: Reducing RocksDB Impact

Why Global Sort? DXF distributes work but introduces a new problem: index SST files generated on different nodes have overlapping key ranges. This causes:

- Frequent compactions in RocksDB.

- Higher write amplification.

- Unpredictable load on TiKV.

Solution: Global Sort ensures all index SST files are globally ordered before ingestion.

How it works:

- Scan & Metadata Collection – TiDB workers scan the table and locally sort blocks of key/value data. Along with the data blocks, they generate metadata describing the key ranges and offsets of each block, then upload both to S3 so any node in the cluster can access them.

- Global Partitioning – The scheduler reads all metadata files and performs a global merge to determine balanced, non-overlapping key partitions. Each partition corresponds to a continuous key range, and the scheduler assigns it to a specific worker.

- Parallel Sorting & SST Generation – Workers download the data blocks belonging to their assigned partitions, perform a final merge-sort, and generate globally ordered SST files that can be safely ingested into RocksDB without key overlap.
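
The three steps above can be sketched as a small in-memory simulation. This is a simplified illustration under stated assumptions, not TiDB's code: keys are plain integers, per-block metadata is modeled as a sample of each block's keys (the real metadata describes key ranges and offsets), lists stand in for objects in S3, and every function name is hypothetical.

```python
import heapq
from bisect import bisect_left


def sort_blocks(rows, block_size):
    """Step 1a: workers cut the scanned data into blocks and sort each block locally."""
    return [sorted(rows[i:i + block_size]) for i in range(0, len(rows), block_size)]


def collect_metadata(block, sample_every=2):
    """Step 1b: per-block metadata, modeled here as every Nth key of the sorted block."""
    return block[::sample_every]


def plan_partitions(all_metadata, num_partitions):
    """Step 2: the scheduler merges all metadata and picks split keys at even quantiles."""
    samples = list(heapq.merge(*all_metadata))
    if not samples:
        return []
    step = len(samples) / num_partitions
    return sorted({samples[int(i * step)] for i in range(1, num_partitions)})


def build_sst(blocks, lo, hi):
    """Step 3: a worker pulls keys in [lo, hi) from every block and merge-sorts them."""
    slices = []
    for block in blocks:
        start = 0 if lo is None else bisect_left(block, lo)
        end = len(block) if hi is None else bisect_left(block, hi)
        slices.append(block[start:end])
    return list(heapq.merge(*slices))


def global_sort(rows, block_size, num_partitions):
    blocks = sort_blocks(rows, block_size)
    metadata = [collect_metadata(b) for b in blocks]
    splits = plan_partitions(metadata, num_partitions)
    bounds = [None] + splits + [None]  # None means an open-ended range
    return [build_sst(blocks, lo, hi) for lo, hi in zip(bounds, bounds[1:])]


if __name__ == "__main__":
    rows = [42, 7, 19, 3, 88, 61, 15, 27, 54, 9, 73, 30]
    for sst in global_sort(rows, block_size=4, num_partitions=3):
        print(sst)
```

Because every output file covers a disjoint key range and is sorted internally, concatenating the files in order yields a fully sorted sequence, which is the property that lets the files be ingested without key overlap.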

Benefits:

- Eliminates SST overlaps.

- Reduces compactions and write amplification.

- Stabilizes TiKV performance.

- Supports a single table of up to 600 TB.

| Job ID | Table Data Size | Rows | Row Size | DXF Nodes | Node Specification | Index Column | Index Creation Time | Throughput |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | 200 TB | 8 billion | 26.84 KB | 25 | 8 vCPU, 32 GB | bigint | 19m 10s | 6.96M rows/s |
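
The throughput and row-size figures in this table follow from its other columns. The snippet below reproduces them; it assumes the sizes are binary units (TiB and KiB), which is the interpretation that matches the reported 26.84 KB row size.

```python
rows = 8_000_000_000
build_seconds = 19 * 60 + 10   # 19m 10s = 1150 s
dxf_nodes = 25
table_bytes = 200 * 2**40      # 200 TB, read as TiB (assumption)

rows_per_second = rows / build_seconds
rows_per_second_per_node = rows_per_second / dxf_nodes
row_size_kib = table_bytes / rows / 1024

if __name__ == "__main__":
    print(f"cluster throughput: {rows_per_second / 1e6:.2f}M rows/s")   # 6.96M rows/s
    print(f"per-node throughput: {rows_per_second_per_node:,.0f} rows/s")  # 278,261 rows/s
    print(f"average row size: {row_size_kib:.2f} KiB")                   # 26.84 KiB
```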

TiDB X: Elastic Worker Clusters & Side-Loading

Why Elastic Workers? Even with DXF + Global Sort, production nodes still perform part of the workload, which can affect QPS and latency in busy clusters.

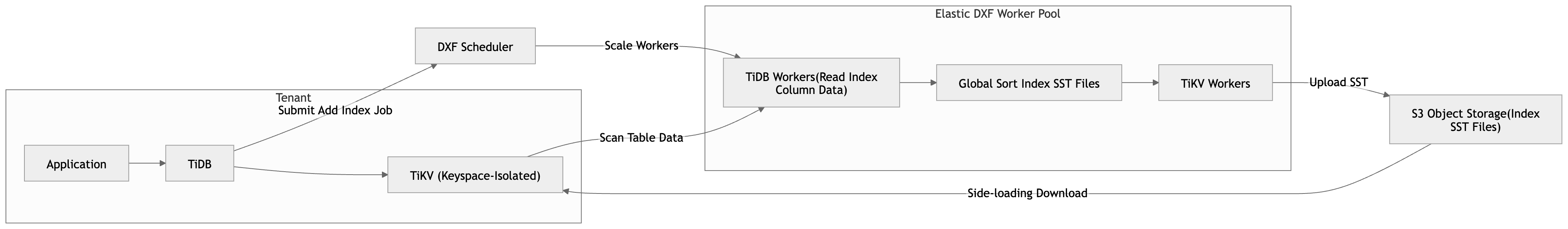

Solution: TiDB X dynamically provisions isolated, elastic worker nodes to handle index builds while decoupling index creation via side-loading:

Figure 2: TiDB X’s elastic worker nodes decoupling index creation.

- DXF automatically scales out the corresponding TiDB workers and TiKV workers based on the size of the index you’re adding.

- TiDB workers handle index column scanning, sorting, and index SST generation.

- TiKV workers upload SSTs to S3, and tenant production TiKV nodes download the index SST files asynchronously.

- The scheduler monitors task progress and dynamically scales workers in or out.
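
To make the scale-out and scale-in behavior concrete, here is a toy simulation of a scheduler that sizes the worker pool from the remaining work. The policy, the thresholds, and the names `target_workers` and `simulate` are all hypothetical; the post does not describe the actual scaling algorithm TiDB X uses.

```python
import math


def target_workers(remaining_bytes, bytes_per_worker, min_workers=1, max_workers=32):
    """Hypothetical policy: one worker per `bytes_per_worker` of remaining index data."""
    if remaining_bytes <= 0:
        return 0
    wanted = math.ceil(remaining_bytes / bytes_per_worker)
    return max(min_workers, min(max_workers, wanted))


def simulate(index_bytes, bytes_per_worker, bytes_done_per_worker_tick):
    """Re-evaluate the pool size each tick as progress is made; return the size history."""
    remaining, history = index_bytes, []
    while remaining > 0:
        workers = target_workers(remaining, bytes_per_worker)
        history.append(workers)
        remaining -= workers * bytes_done_per_worker_tick
    return history


if __name__ == "__main__":
    # Units are arbitrary: a 100-unit index, 10 units per worker, 5 units done per worker per tick.
    print(simulate(index_bytes=100, bytes_per_worker=10, bytes_done_per_worker_tick=5))
    # prints [10, 5, 3, 1, 1]
```

The pool starts large for a large index and shrinks as the job nears completion, so worker capacity is held only while there is work for it.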

Benefits:

- Most of the computation is moved out of the production cluster.

- Peak Add Index throughput: up to 5.5M rows/s.

- Online QPS and P99 latency remain stable.

Note: Tenant production TiKV nodes still participate in the final SST download. For extremely large indexes or resource-constrained tenant TiKV nodes, minor IO/network contention may occur. Compared with traditional workflows, the impact is dramatically reduced.

Conclusion: Add Index Should Not Be a Gamble

For years, adding an index on a large table meant the same ritual: Schedule a maintenance window, alert the on-call team, and hope nothing breaks. That era is over.

With DXF, Global Sort, and elastic worker clusters, TiDB X turns index creation from a calculated risk into a routine operation: 5.5M rows/s with near-zero impact on the workloads your users actually care about. No maintenance windows. No prayer-driven deployments.

And this is just one example of what becomes possible when TiDB online DDL infrastructure is redesigned for true distributed execution. We’re applying the same principles across TiDB X to make every large-scale schema change faster, safer, and more predictable.

Ready to experience fast, low-impact index creation? Try these capabilities in TiDB Cloud and see how TiDB X can accelerate operations in your production workloads.
